Export Data From Prometheus into any Database

Learn how to easily export data from Prometheus into other popular databases

A technical data engineer / passionate technologist who loves tackling interesting problems with code, exploration & persistence.

Introduction

Sling aims to augment the exporting/loading data process into a positive and potentially enjoyable experience. It focuses on 3 of data types interfaces:

- From File Systems to Databases

- From Databases to Databases

- From Databases to File Systems

The list of connections that Sling supports continues to grow. You can see the full list here, but it supports all the major platforms including Clickhouse, DuckDB, Google BigQuery, Google BigTable, MariaDB, MongoDB, MotherDuck, MySQL, Oracle, PostgreSQL, Prometheus, Redshift, Snowflake, SQL Server, SQLite, StarRocks and Trino.

Prometheus

Prometheus is an open-source time-series database originally built by SoundCloud. It is a powerful and flexible monitoring solution ideal for gathering, querying, and alerting on metrics data in dynamic and distributed environments. Its pull-based model, multi-dimensional data model, PromQL query language, and alerting capabilities make it a popular choice for monitoring modern cloud-native applications and infrastructure.

Export data from Prom

An issue faced dealing with Prometheus is the difficulty in exporting data from it. Fortunately, Sling handles this without a skip.

Install Sling

See here for details on how to install Sling. It is usually a simple command, such as:

# On Mac

brew install slingdata-io/sling/sling

# On Windows Powershell

scoop bucket add org https://github.com/slingdata-io/scoop-sling.git

scoop install sling

# On Linux

curl -LO 'https://github.com/slingdata-io/sling-cli/releases/latest/download/sling_linux_amd64.tar.gz' \

&& tar xf sling_linux_amd64.tar.gz \

&& rm -f sling_linux_amd64.tar.gz \

&& chmod +x sling

You should be able to run the sling command at this point.

Set up Connections

Let's now set up the connections. In this blog post, we will use Postgres as the destination database, however the steps are the same if you'd like to load into a different database.

Setting up Prometheus

See here for more details on the keys accepted. But we can simply do:

$ sling conns set prometheus type=prometheus http_url="http://localhost:9090" api_key="xxxxxxxxxxxxxxxxxxxxxx"

$ sling conns test prometheus

6:01PM INF success!

# get list of metrics

$ sling conns discover prometheus --column

+------------+------------+------------+-----+----------------------------------+-------------+--------------+

| DATABASE | SCHEMA | TABLE | ID | COLUMN | NATIVE TYPE | GENERAL TYPE |

+------------+------------+------------+-----+----------------------------------+-------------+--------------+

| prometheus | prometheus | prometheus | 1 | go_gc_duration_seconds | summary | bigint |

| prometheus | prometheus | prometheus | 2 | go_goroutines | gauge | bigint |

| prometheus | prometheus | prometheus | 3 | go_info | gauge | bigint |

| prometheus | prometheus | prometheus | 4 | go_memstats_alloc_bytes | gauge | bigint |

| prometheus | prometheus | prometheus | 5 | go_memstats_alloc_bytes_total | counter | bigint |

| prometheus | prometheus | prometheus | 6 | go_memstats_buck_hash_sys_bytes | gauge | bigint |

| prometheus | prometheus | prometheus | 7 | go_memstats_frees_total | counter | bigint |

.....

Setting up Postgres

Similarly, we will again use the sling conns set command, this time for our PG connection. See here for more details.

$ sling conns set postgres url="postgresql://postgres:postgres@localhost:5432/postgres?sslmode=disable"

$ sling conns test postgres

6:03PM INF success!

Great, we are now ready to move data!

Run with CLI Flags

# export results to stdout, with start time from 2 months ago: "now-2M"

$ ./sling run --src-conn prometheus \

--src-stream 'sum(go_gc_duration_seconds) by (job, instance, quantile) # {"start": "now-2M"}' \

--stdout --limit 10 -d

# load into PG

$ ./sling run --src-conn prometheus \

--src-stream 'sum(go_gc_duration_seconds) by (job, instance, quantile) # {"start": "now-2M"}' \

--tgt-conn postgres --tgt-object public.gc_duration_by_job \

--mode full-refresh

Time Filters

Adding time filters, simply add a suffix to your query. Accepts a JSON value with keys start, end and step:

# {"start": "now-2M"}# {"start": "now-2M", "end": "now-1d"}# {"start": "now-2M", "end": "now-1d", "step": "1d"}

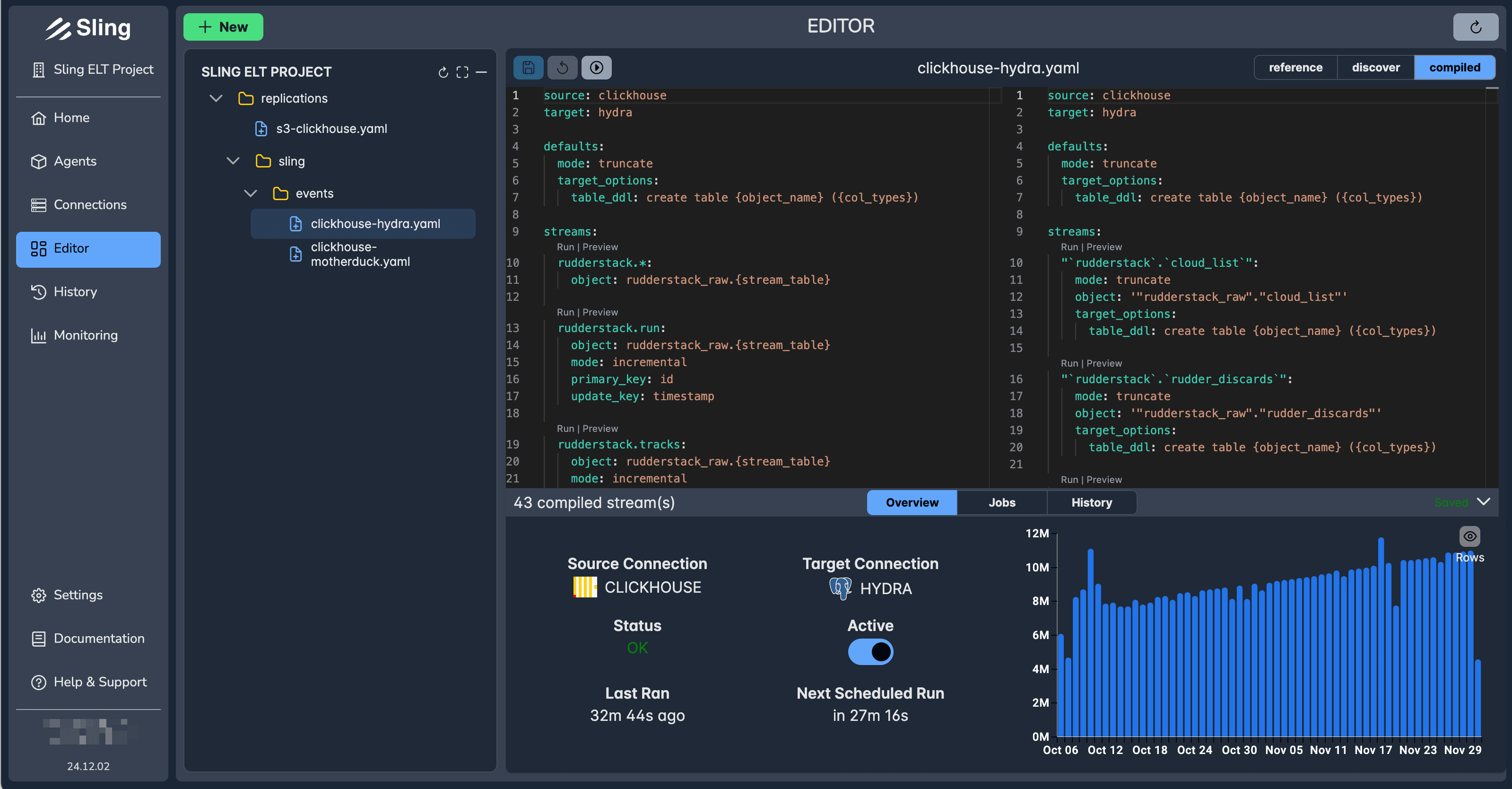

Run with Replication

We can also use a replication. Replications are the best way to use sling in a reusable manner. The defaults key allows reusing your inputs with the ability to override any of them in a particular stream. Both YAML or JSON files are accepted.

# replication.yaml

source: prometheus

target: postgres

defaults:

object: prometheus.{stream_name}

mode: full-refresh

streams:

gc_duration_by_job:

sql: 'sum(go_gc_duration_seconds) by (job, instance, quantile) # {"start": "now-2M", "end": "now-1d", "step": "1d"}'

# incremental load, last 2 days of data, hourly

go_memstats_alloc_bytes_total:

sql: 'sum(go_memstats_alloc_bytes_total) by (job, instance, quantile) # {"start": "now-2d"}'

primary_key: [timestamp, job, instance, quantile]

update_key: timestamp

mode: incremental

We can run the replication like this:

sling run -r replication.yaml

Conclusion

We went over on how easy it was to export data with Sling from Prometheus. Feel free to check out other examples here: https://docs.slingdata.io.